Graduate student Baicen Xiao, Professor and Chair Radha Poovendran and graduate student Hossein Hosseini.

Security researchers in the Department of Electrical Engineering have shown that Google’s new AI tool for videos can be easily tricked by quick video editing. The tool, which uses machine learning to automatically analyze and label video content, can be deceived by periodically inserting a photograph into a video at a very low rate. After the researchers inserted a quick-playing image of a car into a video about animals, the system returned results suggesting the video was about an Audi instead of animals.

Google recently released its Cloud Video Intelligence API to help developers build applications that can automatically recognize objects and search for content within videos. Automated video annotation would be a breakthrough technology. For example, it could help law enforcement efficiently search surveillance videos, sports fans instantly find the moment a goal was scored or video hosting sites filter out inappropriate content.

Google launched a demonstration website that allows anyone to use the tool. The API quickly identifies and annotates key objects within a video. The API website says the system can be used to “separate signal from noise, by retrieving relevant information at the video, shot or per frame” level.

In a new research paper, doctoral students Hossein Hosseini and Baicen Xiao and Professor Radha Poovendran demonstrated that the API can be deceived by slightly manipulating the videos. They showed that one can subtly modify a video by inserting an image into it, so that the system returns only the labels related to the inserted image.

The same research team recently showed that Google’s machine-learning-based platform designed to identify and filter comments from internet trolls can be easily tricked by typos, misspelling abusive words or adding incorrect punctuation.

“Machine learning systems are generally designed to yield the best performance in benign settings. But in real-world applications, these systems are susceptible to intelligent subversion or attacks,” said senior author Radha Poovendran, chair of the UW electrical engineering department and director of the Network Security Lab, in a recent UW Today article. “Designing systems that are robust and resilient to adversaries is critical as we move forward in adopting the AI products in everyday applications.”

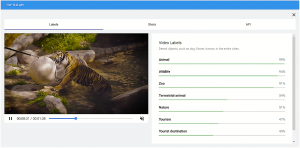

The researchers provided an example of the API’s output for a sample video named “animals.mp4,” which is provided by the API website. Google’s tool does indeed accurately identify the video labels.

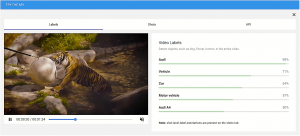

The researchers then inserted the following image of an Audi car into the video once every two seconds. The modification is hardly visible: at a frame rate of 25 frames per second, the image appears only once every 50 video frames.
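The arithmetic behind that rate can be illustrated with a short sketch. This is not the researchers' code: the function names are hypothetical, frames are represented as plain Python objects rather than decoded video, and the sketch assumes the image overwrites the last frame of each two-second block (the article says only that the image is "inserted", so replacement is a simplifying assumption that keeps the video length unchanged).

```python
from typing import List, Sequence, TypeVar

Frame = TypeVar("Frame")


def insertion_interval(fps: int, period_seconds: float) -> int:
    """Number of frames in one period, e.g. 25 fps * 2 s = 50 frames."""
    return round(fps * period_seconds)


def replace_periodically(
    frames: Sequence[Frame], image: Frame, interval: int
) -> List[Frame]:
    """Return a copy of `frames` with every `interval`-th frame replaced by `image`.

    Assumption for illustration: the image overwrites the last frame of each
    block of `interval` frames, so the output has the same length as the input.
    """
    if interval < 1:
        raise ValueError("interval must be at least 1")
    altered = list(frames)
    # Indices interval-1, 2*interval-1, ... are the last frame of each block.
    for index in range(interval - 1, len(altered), interval):
        altered[index] = image
    return altered


# Usage: a 4-second clip at 25 fps, modeled as 100 placeholder frames.
interval = insertion_interval(fps=25, period_seconds=2)
clip = [f"animal_frame_{i}" for i in range(100)]
altered_clip = replace_periodically(clip, "car_image", interval)
altered_fraction = altered_clip.count("car_image") / len(altered_clip)
```

Under these assumptions only one frame in 50, or 2 percent of the video, differs from the original, which is consistent with the description of the change as hardly visible.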

The following figure shows a screenshot of the API’s output for the altered video. In this example, the Google tool shows with high confidence that the altered video is mostly about the car, instead of the animals.

“Such vulnerability of the video annotation system seriously undermines its usability in real-world applications,” said lead author and UW electrical engineering doctoral student Hossein Hosseini in the article. “It’s important to design the system such that it works equally well in adversarial scenarios.”

“Our Network Security Lab research typically works on the foundations and science of cybersecurity,” said Poovendran, the lead principal investigator of a recently awarded MURI grant in which adversarial machine learning is a significant component. “But our focus also includes developing robust and resilient systems for machine learning and reasoning systems that need to operate in adversarial environments for a wide range of applications.”

The research is funded by the National Science Foundation, Office of Naval Research and Army Research Office.

This news originally appeared in a UW Today article by Jennifer Langston.

More News:

- UW Today

- The Telegraph

- The Verge

- Association for Computing Machinery

- Motherboard

- Tech Xplore

- The Register

- EurekAlert

- BGR

- Marketing Dive

- AI Business

- Digital Trends

- Quartz

- University Herald

- I-Connect

- Yahoo News

- Gizbot

- iTech

- Bleeping Computer

- Homeland Security News Wire

- myScience

- Euro News

- IT Breaking News

- From Press

- Science and Technology Research News

- Tech2

- technology.org

- Sytec News

- Squip Time

- International Business Times