Abstract

Attention is a critical process in understanding the complicated audio environments in which we operate. Multiple sounds compete for our attention, and somehow our auditory system picks out the sounds needed to understand at least one of the speakers. Our ability to understand speech in a noisy cacophony is known as the Cocktail Party Effect. We still don’t have good models of how our brains do this, but attention is certainly an important component of the process. I’d like to talk about three aspects of perception that might help explain how attention mediates our ability to solve the cocktail party problem. 1) Most fundamental is a measure of auditory saliency: how can we build more realistic models of what makes a sound pop out of the background? 2) How can we determine which sound a subject is attending to? We have built a real-time auditory attention decoder using EEG signals. 3) How do we model the entire feedback loop, connecting exogenous and endogenous attention into a working system?

The latest work was done this past summer at the Telluride Neuromorphic Cognition Workshop, where we demonstrated real-time auditory attention decoding for the first time. A subject listened through headphones to two different speakers reading stories. The subject chose one story to attend to, and from just 60 seconds of EEG signals we could determine the attended story with 90% accuracy. I will describe how we accomplished these tasks.

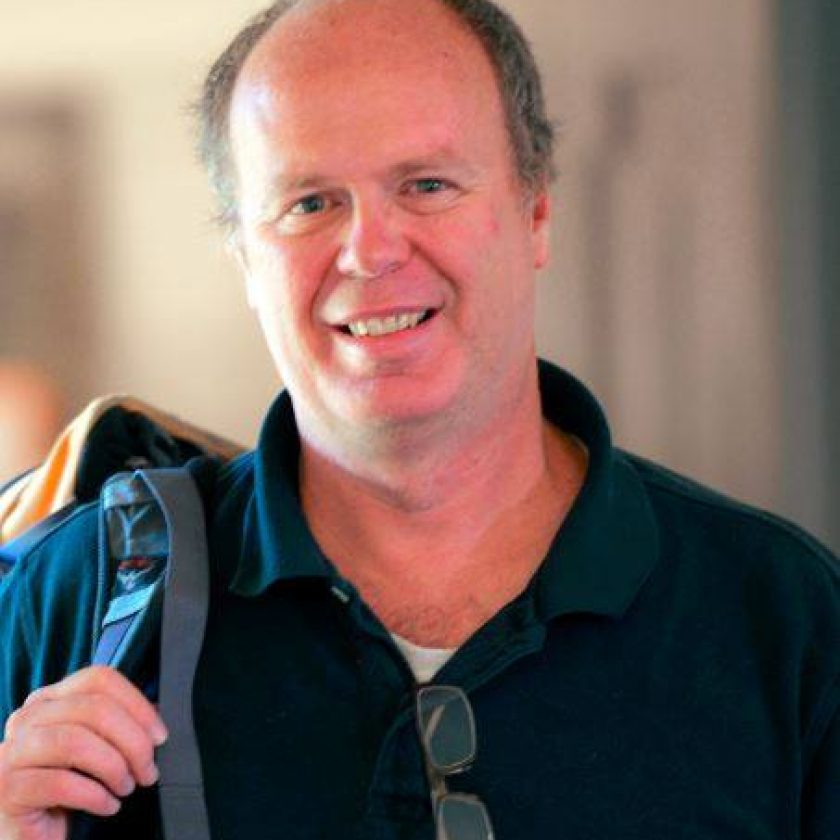

Biography

Malcolm Slaney (Fellow, IEEE) is a Principal Scientist in Microsoft Research’s Conversational Systems Research Center in Mountain View, CA. Before that he held the same title at Yahoo! Research, where he worked on multimedia analysis and on music- and image-retrieval algorithms for databases with billions of items. He is also a (consulting) Professor at Stanford University’s Center for Computer Research in Music and Acoustics (CCRMA), Stanford, CA, where he has led the Hearing Seminar for the last 20 years. Before Yahoo!, he worked at Bell Laboratories, Schlumberger Palo Alto Research, Apple Computer, Interval Research, and IBM’s Almaden Research Center. For the last several years he has helped lead the auditory and attention groups at the NSF-sponsored Telluride Neuromorphic Cognition Workshop. He is a coauthor, with A. C. Kak, of the IEEE book Principles of Computerized Tomographic Imaging, which was republished by SIAM in its Classics in Applied Mathematics series. He is coeditor, with S. Greenberg, of the book Computational Models of Auditory Function. Prof. Slaney has served as an Associate Editor of the IEEE TRANSACTIONS ON AUDIO, SPEECH, AND SIGNAL PROCESSING, IEEE MULTIMEDIA MAGAZINE, the PROCEEDINGS OF THE IEEE, and the ACM Transactions on Multimedia Computing, Communications, and Applications.