By Wayne Gillam / UW ECE News

A research team led by UW ECE professors Amy Orsborn and Sam Burden has applied game theory to create a new computational framework for designing neural interfaces that adapt to the user. Their work offers a new, principled approach to improving human-machine interaction. Shown above: An experiment participant uses their forearm muscle contractions, with help from a neural interface, to move a cursor (blue dot) toward a target (red dot) onscreen. Photo courtesy of Maneeshika Madduri.

There is an exciting future on the horizon — one in which your thoughts could directly control electronic devices you use every day. In many ways, that future is already here, enabled by neural interfaces — engineered devices designed to exchange information with the body’s nervous system. From consumer wearables to clinical devices, electronics controlled by neural interfaces are making their way into the marketplace and medical practice. These technologies are demonstrating potential for augmenting, and even restoring, human capabilities in profound ways.

For example, electroencephalogram (EEG) headbands, such as Muse and Neurosity Crown, are being used to help people improve mental focus. Electromyography (EMG) wristbands, such as the Meta Neural Band and the Mudra Band for the Apple Watch, can enable hands-free control of electronic devices through subtle finger movements. And implantable neural interfaces, such as the Synchron Stentrode and Neuralink’s Telepathy chip, are allowing paralyzed patients to use neural impulses to control computers, digital devices, and robotic limbs.

But despite these remarkable advances, there is still much work to be done to optimize these technologies and make them useful for large numbers of people. One of the most significant barriers is that neural interfaces need to be customized to some degree for each individual user — because no two brains or bodies are exactly alike.

Customizing the control of a neural interface for the unique brain of each user is advantageous for ensuring desired outcomes and reliable performance. When considering the vast diversity of human nervous systems and the scale required for real-world deployment of this technology, it becomes clear how daunting this challenge can be for scientists and engineers.

To help address this challenge, UW ECE professors Amy Orsborn and Sam Burden are working together to build a strong foundation for engineers developing neural interfaces that can learn from and adapt to individual users.

In a recent paper in Nature Machine Intelligence, Orsborn, Burden, and their research team describe a new computational framework for neural interface design that is based in large part on game theory, the mathematical study of strategic interactions among rational decision-makers. In this setting, the “decision-makers” are the human user and the adaptive algorithms embedded within the neural interface itself. And the “game” isn’t about competition — it’s about cooperation. Both the human user and the neural interface continually adjust their strategies, learning from each other to improve performance over time.

Amy Orsborn (left), a Cherng Jia and Elizabeth Yun Hwang Professor of Electrical & Computer Engineering and Bioengineering, and Sam Burden (right), a UW ECE associate professor. Photos by Ryan Hoover / UW ECE

“This study is perhaps the first to bring game theory, and to a lesser extent, control theory, into neural engineering,” Orsborn said. “It’s one of a very small subset of studies that are trying to use those kinds of computational and mathematical frameworks for this setting.”

Orsborn is a well-known leader in the field of neural engineering, working at the intersection of engineering and neuroscience to develop therapeutic neural interfaces for restoration and rehabilitation of human sensorimotor capabilities. She is a Cherng Jia and Elizabeth Yun Hwang Professor in UW ECE and the UW Department of Bioengineering as well as a faculty member of the Center for Neurotechnology at the UW. She also serves as a scientific adviser for Meta Reality Labs.

Burden is a UW ECE associate professor known for his work discovering and formalizing principles of human sensorimotor control, focusing on applications in robotics, neural engineering, and human-AI interaction.

“The basic idea we had was that game theory could be the right computational framework to get predictions for what the outcome of interactions between the user and the neural interface might be and then be able to shape those outcomes toward a desired end state and better performance,” Burden said. “For example, in a rehabilitation setting, you could design a neural interface that elicits movement or muscle activity that otherwise might be hard to command or instruct somebody to do.”

Developing mathematical and computational frameworks for neural interface design is a long-term effort. Orsborn and Burden said they anticipate continuing this line of research for several years and widening their collaboration to involve other UW ECE faculty.

In this study, their research team was a cross-disciplinary group of UW graduate and undergraduate students. The group included the paper’s lead author and UW ECE alumna Maneeshika Madduri (Ph.D. ECE ’24), who was a doctoral student at the time the research took place. UW ECE alumna Momona Yamagami (Ph.D. EE ’22), also a doctoral student at the time of the study, co-authored the paper along with bioengineering doctoral student Si Jia Li and UW alumnus Sasha Burckhardt (B.S. in Neuroscience ’23), who was an undergraduate student during the study. Orsborn and Burden were senior authors providing oversight and guidance for the research and experiments that took place in their labs.

From theory to practice — an experimental validation

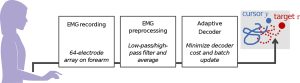

In the research team’s experiment, the participant wears a strip of EMG electrodes on their forearm, secured by tape. The electrodes record muscle activity and send this information to a data processor and an adaptive, algorithmic decoder. The decoder output determines cursor velocity, which is then integrated to give the cursor position displayed onscreen. Together, the EMG electrode strip, the data processor, and the algorithmic decoder make up the neural interface. Illustration provided by Maneeshika Madduri.

Besides bringing game theory into neural engineering, the study was also distinguished by an experiment that demonstrated and validated the computational framework developed by the research team. In the experiment, the human participant attempts to control a cursor and follow a target onscreen, using muscle contractions in their forearm. The participant wears a strip of EMG electrodes on the skin of their forearm, secured by tape. The electrodes record muscle activity and send this information to a data processor and an adaptive, algorithmic decoder. The decoder output determines cursor velocity, which is then integrated to give the cursor position displayed onscreen. Together, the EMG electrode strip, the data processor, and the algorithmic decoder make up the neural interface.

This experiment took place in a closed-loop system. The loop works as follows: the human participant sees the cursor and target onscreen and converts what they see into neural signals that travel to their arm, resulting in muscle contractions and electrical signals from motor neurons in the forearm. Those signals are picked up by the EMG electrodes and sent to the data processor and adaptive decoder, which then updates the cursor position onscreen, closing the information loop.
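
For readers who think in code, the loop described above can be sketched in a few lines of Python. This is an illustrative toy, not the research team's software: the linear decoder, the four-channel "EMG" signal, the `simulated_user` function, and every numeric value are assumptions made for the example.

```python
from typing import List, Sequence

Vector = List[float]


def decode_velocity(decoder: Sequence[Sequence[float]], emg: Sequence[float]) -> Vector:
    """Map EMG features to a 2-D cursor velocity (assumed linear decoder)."""
    return [sum(w * x for w, x in zip(row, emg)) for row in decoder]


def integrate_position(position: Sequence[float], velocity: Sequence[float], dt: float) -> Vector:
    """Euler step: the cursor position advances by velocity * dt."""
    return [p + v * dt for p, v in zip(position, velocity)]


def simulated_user(cursor: Sequence[float], target: Sequence[float]) -> Vector:
    """Hypothetical user: four 'muscles' (right, left, up, down), each
    activated in proportion to the visible error in its direction.
    Activations are nonnegative, like EMG amplitudes."""
    ex = target[0] - cursor[0]
    ey = target[1] - cursor[1]
    return [max(ex, 0.0), max(-ex, 0.0), max(ey, 0.0), max(-ey, 0.0)]


def run_closed_loop(decoder: Sequence[Sequence[float]], target: Sequence[float],
                    steps: int = 200, dt: float = 0.05) -> Vector:
    """See the screen -> contract muscles -> decode -> move cursor, repeated."""
    cursor = [0.0, 0.0]
    for _ in range(steps):
        emg = simulated_user(cursor, target)       # user reacts to what they see
        velocity = decode_velocity(decoder, emg)   # interface decodes EMG
        cursor = integrate_position(cursor, velocity, dt)  # cursor is redrawn
    return cursor


# Assumed fixed decoder: opposing muscle pairs push the cursor in opposite directions.
DECODER = [[1.0, -1.0, 0.0, 0.0],
           [0.0, 0.0, 1.0, -1.0]]

final_cursor = run_closed_loop(DECODER, target=[1.0, -0.5])
print(final_cursor)
```

With this decoder, the simulated cursor settles onto the target because each pass through the loop shrinks the remaining error by a fixed fraction.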

The neural interface was also considered to be co-adaptive. This is because both the human participant and the algorithms in the neural interface are learning and adapting alongside each other, seeking to accomplish the task and optimize performance together.

This experiment validated the research team’s new computational framework for closed-loop, co-adaptive neural interfaces, allowing researchers to predict how algorithmic changes would impact the neural interface performance and revealing how various properties of the interface itself could shape or nudge user behavior toward better performance or preferred outcomes.
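
To give a feel for how a game-theoretic model can both predict and shape outcomes, here is a deliberately small Python sketch. It is not the model from the paper. It assumes a scalar user gain `e` and a scalar decoder gain `d`, a shared task goal `d * e = 1`, a quadratic effort penalty for each side, and simultaneous gradient updates; all names and numbers are hypothetical.

```python
from typing import Tuple


def co_adapt(lambda_user: float, lambda_decoder: float, step: float = 0.05,
             iterations: int = 20000,
             start: Tuple[float, float] = (0.5, 0.5)) -> Tuple[float, float]:
    """Two learners adapt at once, each descending its own cost.

    Assumed costs (illustrative only):
      user:    (d*e - 1)^2 + lambda_user    * e^2
      decoder: (d*e - 1)^2 + lambda_decoder * d^2
    Returns the (user gain, decoder gain) the pair settles on.
    """
    e, d = start
    for _ in range(iterations):
        task_error = d * e - 1.0
        grad_e = 2.0 * d * task_error + 2.0 * lambda_user * e      # user's gradient
        grad_d = 2.0 * e * task_error + 2.0 * lambda_decoder * d   # decoder's gradient
        # Simultaneous update: neither side sees the other's new gain first.
        e, d = e - step * grad_e, d - step * grad_d
    return e, d


# Same user, two decoder designs that differ only in the decoder's penalty.
e_low, d_low = co_adapt(lambda_user=0.01, lambda_decoder=0.01)
e_high, d_high = co_adapt(lambda_user=0.01, lambda_decoder=0.04)
print(e_low, d_low)
print(e_high, d_high)
```

In this toy setting, raising the decoder's penalty makes the decoder contribute less gain, and the simulated user compensates with more effort while the task error stays small. That is one simple way a design parameter on the interface side can nudge user behavior, under the stated assumptions.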

“Before this study, we couldn’t design co-adaptive neural interfaces from any sort of principled approach. It was always ad hoc,” Orsborn said. “But now, if engineers are designing a system where they anticipate learning on the part of the user and in the neural interface, they will have a framework they can use to design that system.”

What’s next for neural interfaces at UW ECE

A close-up of the EMG electrode strip used by the research team. Photo provided by Maneeshika Madduri.

Next steps for Orsborn and Burden include continuing to build on the computational framework they have developed by testing more machine learning and AI algorithms that lead to distinct, measurable outcomes within the context of neural interface design. They also have discussed bringing AI into the system loop in a more substantive way to enable more intelligent customization of the user experience.

UW ECE has several faculty members who engage in neural engineering research, so Orsborn and Burden are already planning to bring their work on mathematical and computational frameworks for neural interface design to other collaborative projects within the Department. Burden is putting together projects and proposals with UW ECE professors Lillian Ratliff, Kim Ingraham, and Yiyue Luo to further develop these frameworks and apply the research to exoskeletons and wearable technologies. Orsborn is looking forward to building on her current collaboration with UW ECE Associate Professor Eli Shlizerman, who holds a joint appointment in applied mathematics. They are currently conducting studies modeling how a person’s brain changes while using a neural interface.

Orsborn and Burden both said that they were enthusiastic about what the future holds as they build out this body of research in collaborative ways.

“I’m really excited about the future directions that this research opens up — the possibility that we can design these systems to shape positive outcomes for people,” Orsborn said. “That has a huge range of potential applications, which are all very much new and to be explored in how useful or productive they will be, but that’s what gets me really excited because it opens a lot of new doors for exploration.”

Burden added, “I’d also like people to know that we’re doing human-centered engineering, as opposed to just making a device and then requiring the user to adapt to it. Here, we’re making devices that adapt to you.”

Learn more about this topic in the article, “Computational framework to predict and shape human-machine interactions in closed-loop, co-adaptive neural interfaces,” in the journal Nature Machine Intelligence. Research funding was provided by Meta Reality Labs Research and the National Science Foundation.